The Inevitable Token War: Compute's Doomsday Arms Race

Tokens are the “digital oil” of the AI era

A war is brewing—not for land or oil (though both will factor in), but for a commodity no one knew existed just years ago. Compute tokens: the digital fuel powering AI’s rise. The power of these tokens? It will rival a nation's nuclear arsenal in geopolitical dominance.

AI has infested every corner of our lives. Social media? A cesspit of AI slop—mindless videos, posts, and imagery churned out by the second. Every major brand shoehorns the "AI revolution" mantra into their ads, regardless of relevance or need. And the media? They gleefully hammer home that you'll be unemployed by Christmas, courtesy of the robots.

All this? Pure fad—and you'd be spot on to dismiss every bit of it. But don’t be fooled: the world is changing rapidly. The real shift is underway, and it's ruthless. Large Language Models (LLMs) are gutting every major sector—from coding and copywriting to medicine and manufacturing. What makes it terrifying? LLMs don't sleep. They iterate 24/7, compounding improvements exponentially. Change won't be linear; it'll be parabolic—a hockey-stick curve that leaves laggards in the dust.

What Are Tokens & Why Are They Scarce?

A “token” is roughly a chunk of a word — sometimes a whole word, sometimes just a piece of one. When you type a message to Claude, it gets broken down into these tokens, and the model has to do maths on every single one. So tokens are effectively a proxy for how much compute (processing power) a query uses. More tokens in, more compute required.
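To make the "chunk of a word" idea concrete, here is a minimal sketch of a greedy longest-match tokenizer. The vocabulary `VOCAB` is hypothetical and hand-picked for this one example; real models learn vocabularies of tens of thousands of pieces from data and use more sophisticated splitting rules.

```python
# Toy greedy longest-match subword tokenizer.
# VOCAB is a hypothetical, hand-picked vocabulary for illustration only.
VOCAB = {"token", "s", "un", "believ", "able", "compute", " "}

def tokenize(text: str) -> list[str]:
    """Split text into the longest vocabulary pieces, left to right.
    Characters not covered by VOCAB become single-character tokens."""
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest candidate piece first, then shrink it.
        for j in range(len(text), i, -1):
            if text[i:j] in VOCAB:
                tokens.append(text[i:j])
                i = j
                break
        else:
            tokens.append(text[i])  # fallback: emit one character
            i += 1
    return tokens

pieces = tokenize("unbelievable tokens")
print(pieces)                # ['un', 'believ', 'able', ' ', 'token', 's']
print(len(pieces), "tokens") # 6 tokens
```

Two words become six tokens here, and the model does its maths on each of the six.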

To grasp just how much processing power we are talking about, consider this: training GPT-4 required roughly 10,000,000,000,000,000,000,000,000 individual calculations. That's ten septillion operations. To actually run that in a reasonable timeframe (weeks rather than centuries), you need thousands of high-end GPUs running in parallel, 24/7, consuming megawatts of electricity. And remember, that’s just the training.
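A back-of-the-envelope sketch shows why "weeks rather than centuries" depends on the GPU count. The per-GPU throughput (1e15 FLOP/s peak) and the 40% utilisation figure are assumptions picked as round numbers, not vendor specifications; only the 10^25 total comes from the text.

```python
TRAINING_FLOPS = 1e25      # total operations, as quoted in the text
SECONDS_PER_DAY = 86_400

def training_days(num_gpus, peak_flops_per_gpu, utilisation):
    """Days needed to finish the run at a given sustained throughput."""
    sustained = num_gpus * peak_flops_per_gpu * utilisation
    return TRAINING_FLOPS / sustained / SECONDS_PER_DAY

# Assumed hardware figures (hypothetical round numbers).
PEAK = 1e15   # FLOP/s per GPU
UTIL = 0.4    # fraction of peak actually achieved

cluster = training_days(10_000, PEAK, UTIL)
single = training_days(1, PEAK, UTIL)
print(f"10,000 GPUs: {cluster:.0f} days")        # about 29 days
print(f"1 GPU: {single / 365:.0f} years")        # about 793 years
```

Under these assumptions, ten thousand GPUs finish in about a month, while a single GPU would need roughly eight centuries.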

Once a model is trained and ready for public queries, the real work begins. With hundreds of millions of users sending queries every day, the total processing demand pushes into exaFLOP territory: a billion billion calculations per second, sustained. To put that in context, the world's fastest supercomputer peaks at about 1.2 exaFLOPs. A single popular LLM serving its users needs roughly that same horsepower, running flat out, all day... and this number is only set to grow.
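The serving load can be sketched the same way. Every input below is a hypothetical placeholder rather than a measurement: the query volume, the tokens per query, and the cost per token (using the common rule of thumb of about 2 operations per model parameter per token). Change any of them and the answer moves by orders of magnitude.

```python
SECONDS_PER_DAY = 86_400

def sustained_flops(queries_per_day, tokens_per_query, flops_per_token):
    """Average FLOP/s needed to serve the given daily load."""
    return queries_per_day * tokens_per_query * flops_per_token / SECONDS_PER_DAY

# All three inputs are assumed placeholders, not measurements.
demand = sustained_flops(
    queries_per_day=1e9,
    tokens_per_query=1_000,
    flops_per_token=1e11,  # e.g. ~2 operations x 50 billion active parameters
)
print(f"{demand / 1e18:.2f} exaFLOP/s sustained")  # 1.16
```

With these placeholder inputs the average load lands at about one exaFLOP per second, which is the scale the paragraph above describes.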

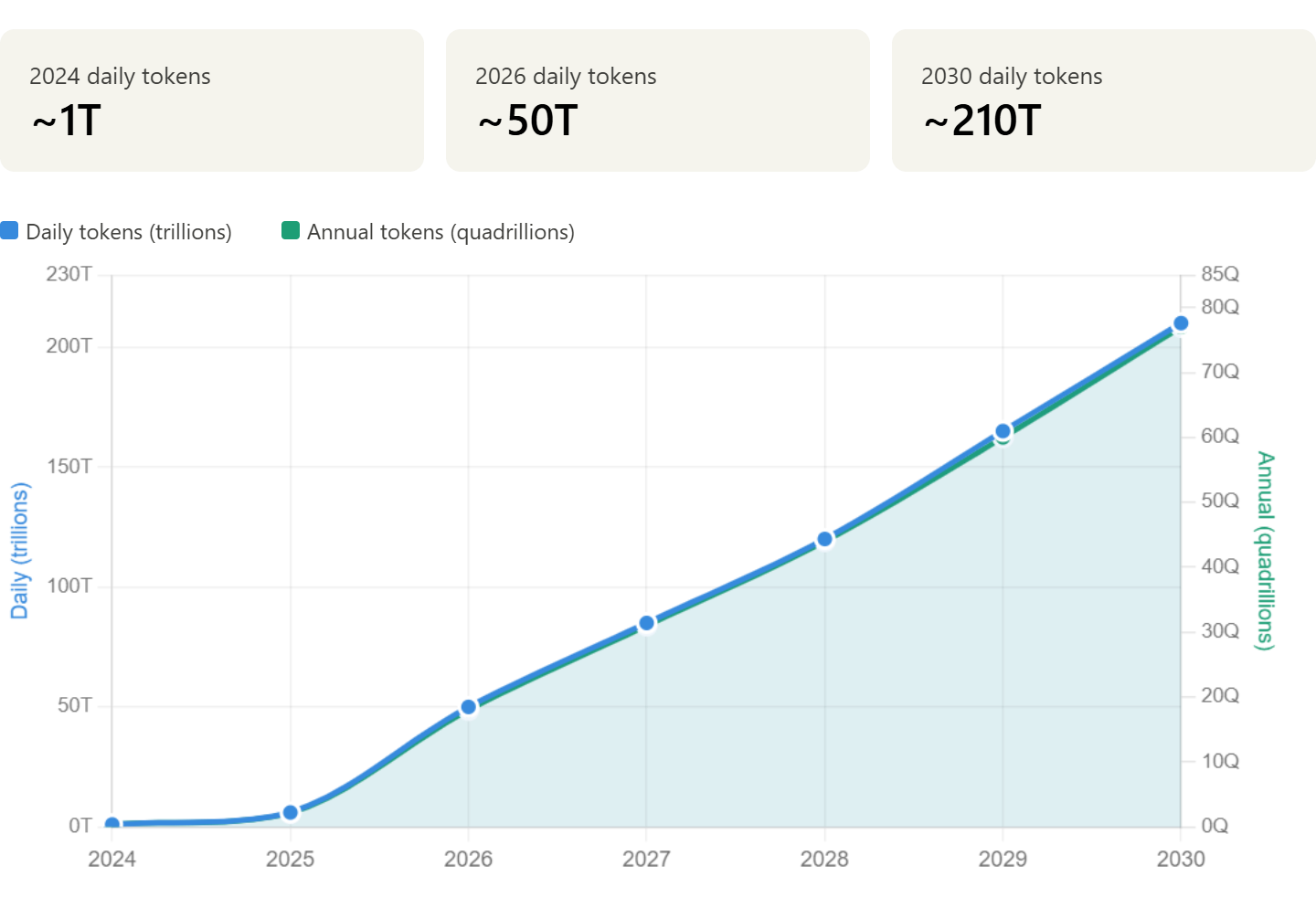

Token usage projection showing 210x growth from 2024 to 2030

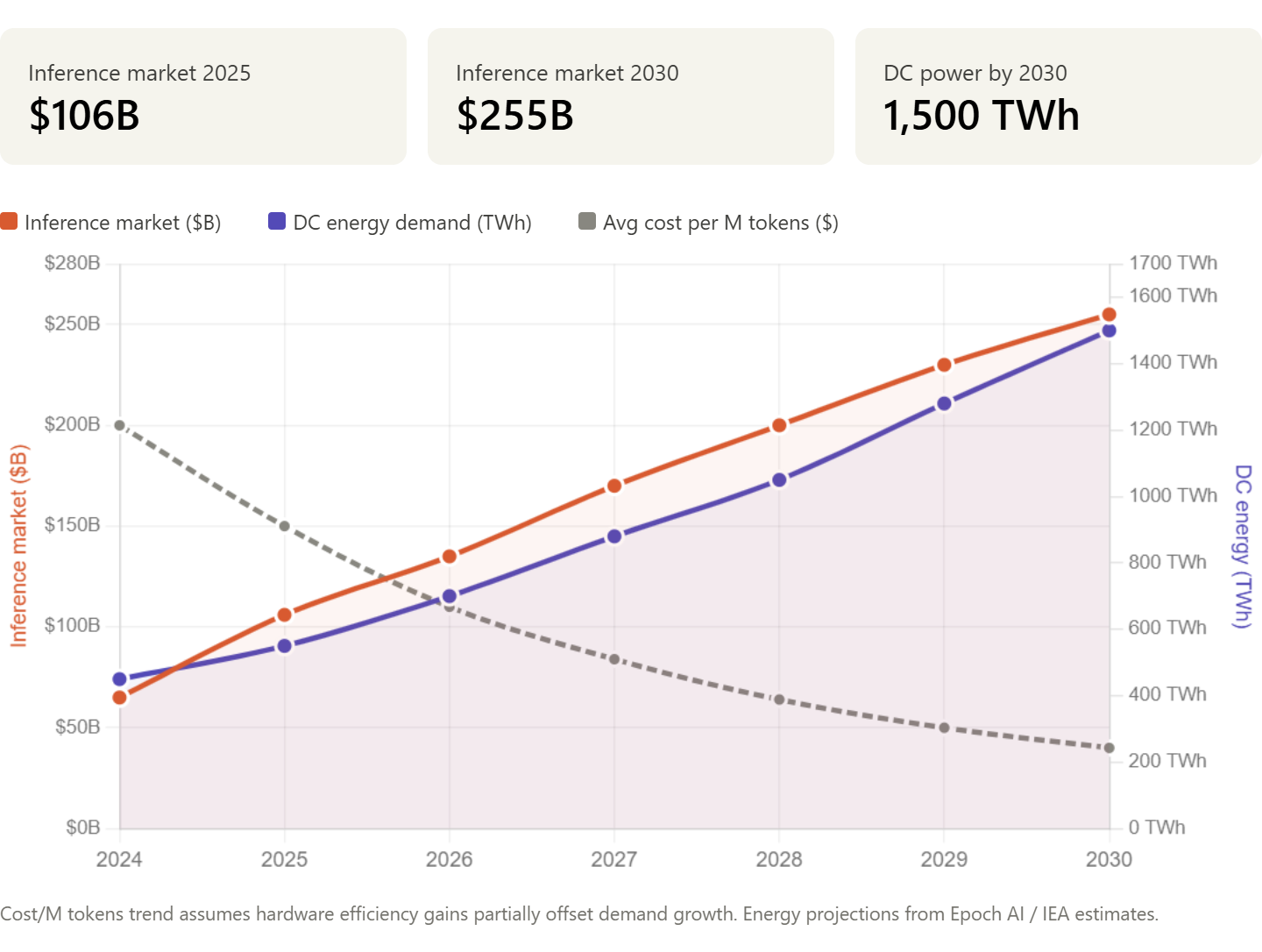

Inference market size, data centre energy demand, and the average cost per million tokens

When we say inference, we mean the work the model does to process your queries and generate a response. Inference is the machine and tokens are what we feed into it. Think of tokens as the currency and inference as the cost of minting them.

But here’s the kicker: that currency’s about to get scarce as hell. Three bottlenecks will ration tokens like 1970s oil.

Chip Manufacturing Bottleneck: TSMC’s Stranglehold

Over 90% of the world’s bleeding-edge chips — Nvidia’s H100s, the Blackwell beasts, Apple’s silicon — pour from one spot: TSMC in Taiwan. One company, one island, one earthquake zone sitting in the middle of the most volatile geopolitical corridor on the planet. What could possibly go wrong?

The entire AI revolution — every chatbot, every autonomous drone, every billion-dollar data centre — runs on chips that come from a single point of failure. If China blockades Taiwan tomorrow, or a typhoon flattens the wrong fab, the global AI supply chain doesn’t slow down. It stops.

And it’s not like you can just spin up a new chip factory. A cutting-edge fab takes three to five years to build, costs upward of $20 billion, and requires engineering talent that takes a generation to develop. The US is throwing $52 billion at the problem through the CHIPS Act, with TSMC building new fabs in Arizona, but those won’t hit full production at the most advanced nodes until 2027 at the earliest. Intel is scrambling with its 18A process in Oregon. Samsung is pushing for 2nm in Texas. But right now, today, if you want the chips that train and run frontier AI models, you’re queuing at TSMC’s door along with every government, hyperscaler, and defence contractor on Earth.

This isn’t a supply chain. It’s a chokepoint. And everyone who matters knows it.

The Energy Bottleneck

But even if you solve the chip problem — even if every fab on the planet is by some miracle running at full tilt — you hit the next wall almost immediately. Power.

AI data centres are already consuming roughly 1% of global electricity. By 2027, the IEA projects that figure hits 2%. That might not sound like much until you realise what 1% of global power actually looks like — it’s the entire electricity consumption of a mid-sized country, just to keep the servers humming.

Training a single GPT-4 class model chews through around 50 gigawatt-hours of energy. That’s roughly what a nuclear submarine’s reactor puts out over its operational cycle. And that’s just training — the one-off learning phase. Inference, the bit that never stops, scales worse. Every query, every user, every day, the meter keeps ticking. And the meter is getting bigger.
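To translate that energy figure into a grid connection, here is a small sketch that converts total energy into average power draw. The 50 GWh comes from the text; the 90-day run length is an assumption chosen for illustration.

```python
def average_power_mw(energy_gwh, days):
    """Average draw in megawatts for a run that uses energy_gwh over `days`."""
    hours = days * 24
    return energy_gwh * 1_000 / hours  # GWh -> MWh, then MWh per hour = MW

# 50 GWh is the figure quoted above; 90 days is an assumed run length.
print(f"{average_power_mw(50, 90):.1f} MW")  # 23.1 MW
```

That is a constant draw of about 23 megawatts for three months, for one training run.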

The grids aren’t ready. Northern Virginia — home to the densest concentration of data centres on the planet — is already running at 40% capacity. There’s physically not enough power to plug in more racks. The same story is playing out across the EU, where the choice is increasingly becoming: do we power the AI cluster, or do we keep the lights on for the town next door?

And the fix isn’t quick. You can’t just flip a switch. Renewables are scaling, but not fast enough. New nuclear takes a decade to permit and build. Fossil fuel expansion is politically radioactive in half the world. The infrastructure that powers AI is stuck behind a five-to-ten-year buildout cycle, while demand is doubling every twelve months.
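The mismatch between build time and demand growth is easy to underestimate, so here is the arithmetic as a short sketch. It takes the twelve-month doubling at face value and assumes it holds for the whole build period, which is a strong assumption.

```python
def demand_multiple(years, doubling_time_years=1.0):
    """How many times demand has multiplied after `years` of steady doubling."""
    return 2 ** (years / doubling_time_years)

for build_years in (5, 10):
    print(f"{build_years}-year build: demand x{demand_multiple(build_years):,.0f}")
# 5-year build: demand x32
# 10-year build: demand x1,024
```

A plant sized for today's demand would open into a market 32 to 1,024 times larger, if the doubling really did continue that long.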

So now your tokens aren’t just limited by how many chips you can make. They’re limited by how many watts you can pull from the grid to run those chips. Every token has an energy cost baked into it — and when the power runs out, the tokens stop flowing. It’s not a metaphor anymore. Tokens are energy-backed scarcity, and we’re heading for the AI equivalent of the 1970s oil shock.

Next up…

Data Centres: Shovels in Molasses

So let’s just imagine you’ve got your chips in hand and juice at the ready. What now? Time to build a data centre. For anyone who doesn’t know, these things are hyperscale monsters: think xAI’s Memphis behemoth and OpenAI’s Stargate fantasy. You’re looking at 3-5 years from blueprint to buzz. The first couple of years are spent hustling over permits, zoning fights and NIMBY whines. Then it’s time for the hard work: custom substations, mile-long cables, cooling towers and dwarf skyscrapers.

Nvidia CEO Jensen Huang calls ’em “AI factories”—bookings clogged 18-24 months deep. Demand explodes every quarter, doubling the token thirst. Supply? Shovels through paperwork hell, permit purgatory, and wet cement molasses.

The UAE is betting on deserts, but sandstorms and 50°C heat add to the “fun.” China builds fast(ish), but export bans keep it caged.

Result: token famine. You want inference at scale? Queue up—or pay black-market premiums.

Don’t be fooled by renderings of gleaming server farms. These are multi-year marathons in a sprint race. Tokens wait for no builder.

Infrastructure Imperialism: Who Owns the Tokens?

So you’ve seen the token pinch: TSMC chip gambles, grid blackouts and data centre build-outs mired in molasses. But don’t think for a second this is just corporate whining. Nations are waking up to the prize: tokens as nukes for the technological era.

Own the hardware, own the power. Think oil cartels of the 1970s, but for intelligence. The US bans exports, China stockpiles silicon, the UAE vacuums sand for servers. No more “global commons”: it’s a sovereign scramble to the top! Hyperscalers beg for fabs and megawatts; governments hoard ’em like gold.

Here’s the playbook: who’s racing, what’s the turf, and why your tokens hang in the balance. Buckle up—the token war’s going global.

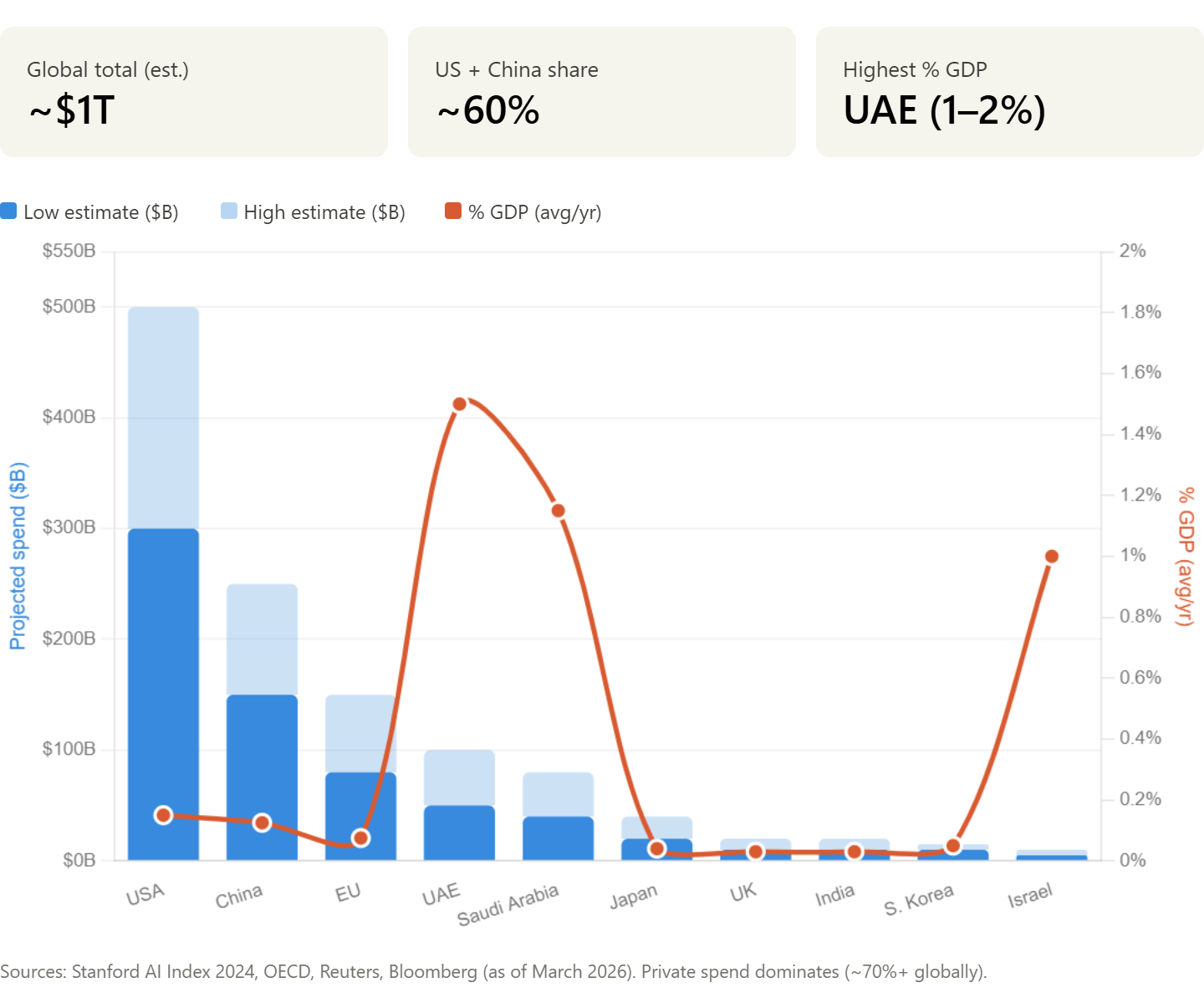

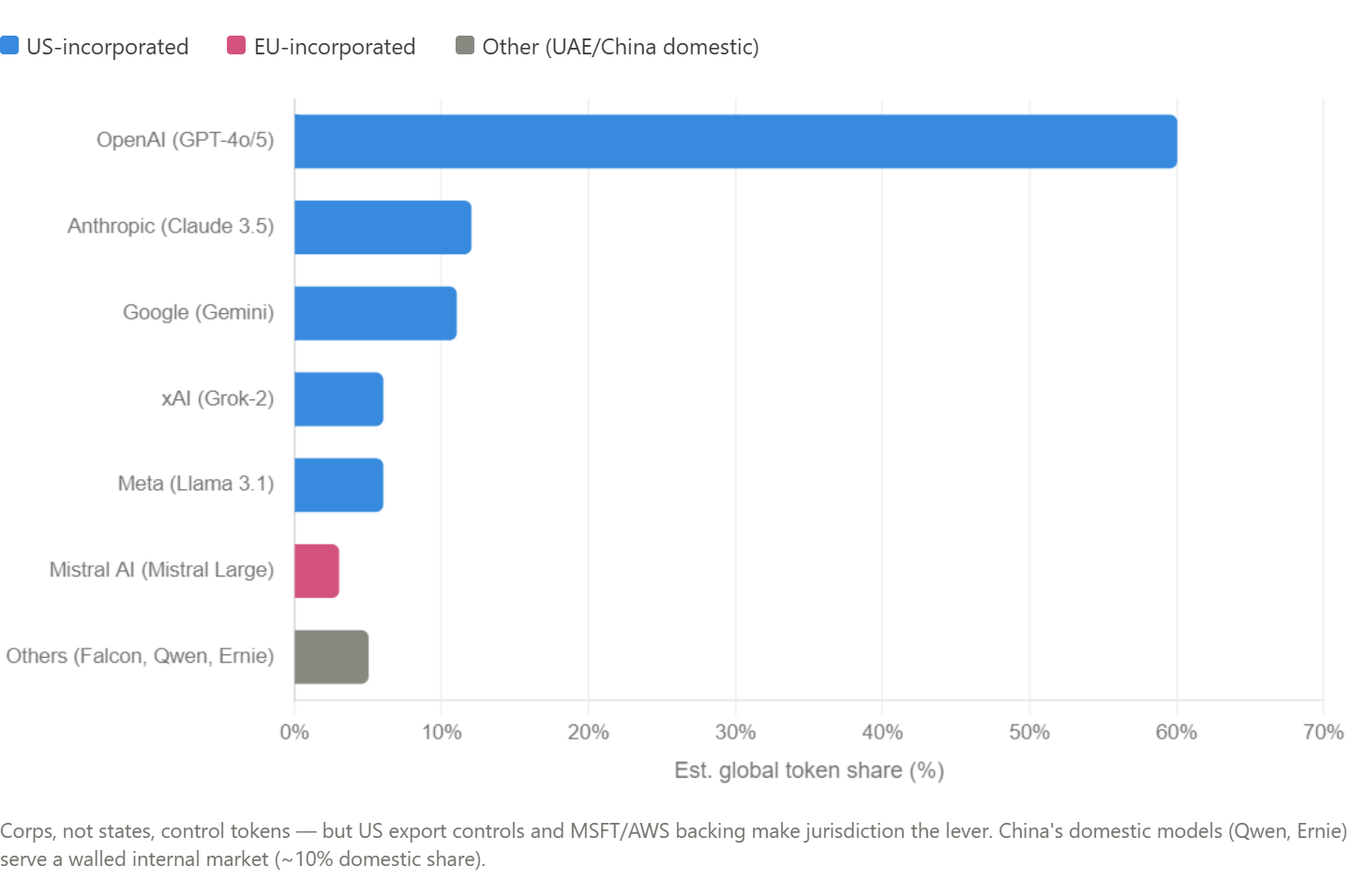

The stacked bars show the spending range (solid blue for the low estimate, lighter blue for the upper range), and the coral line overlays the percentage of GDP — which is where the story gets interesting. The US and China dwarf everyone in raw dollars with their combined spend making up to 70% of global spending, but the UAE and Saudi Arabia are committing a far bigger slice of their economies to this race. Israel is the other outlier on the GDP line, punching well above its weight relative to national output.

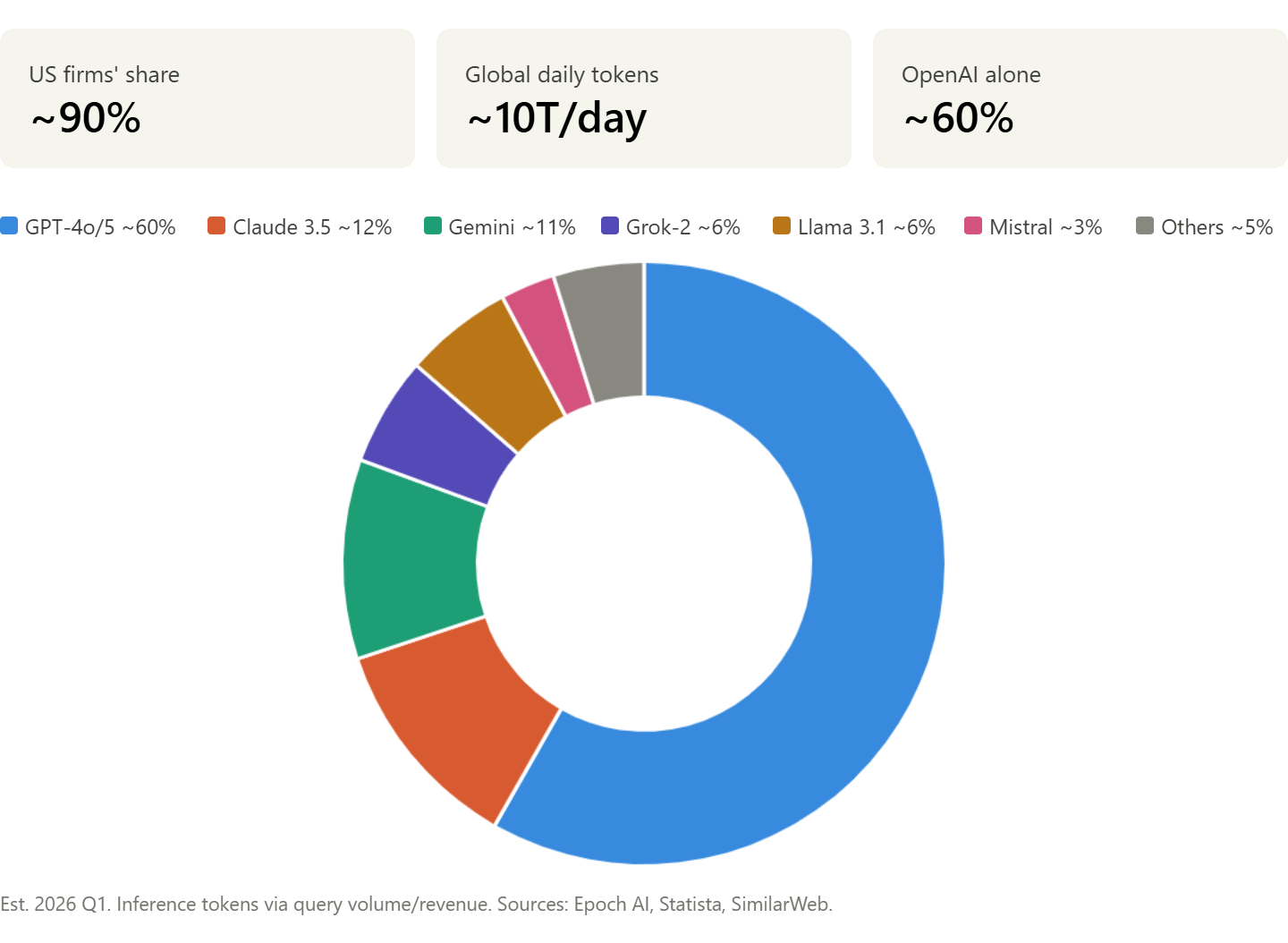

If we take a look at the current market share of the inference tokenenomy, it’ll give us an idea of exactly who is dominating right now.

Here is a chart dividing up the current largest LLMs by market share.

Let us now see which country each of these models ultimately belongs to:

At a glance we can see that OpenAI alone commands around 60% of global frontier inference tokens. Add Anthropic, Google, xAI, and Meta and you're at 95% — all US-incorporated, all backed by the same handful of hyperscalers. That's not a market, it's an oligopoly. Five companies, three major backers (Microsoft, Amazon, Alphabet), one country's legal jurisdiction. If the US government wanted to turn the tap off for any nation or entity, it could do it tomorrow through export controls and corporate leverage. It doesn't need to own the models — it owns the environment they exist in.

Looking at these charts, however, we could easily be misled into believing OpenAI is monopolizing the tokenenomy. So let us dig a little deeper into the backer concentration behind the scenes. Microsoft has its fingers in both OpenAI (49%) and Mistral. Amazon backs Anthropic. Alphabet owns Google DeepMind outright. So even the apparent competition between model families is largely funded by three cloud providers who also control the infrastructure those models run on: Azure, AWS, and GCP. They're not just investing in AI companies, they're vertically integrating the entire stack: chips to cloud to model to end user. The token economy isn't just US-dominated, it's hyperscaler-dominated.

But there is more. Do we really believe China is as absent from the global picture as these charts would have us believe? Baidu's Ernie and Alibaba's Qwen serve a domestic market behind the Great Firewall, with Ernie holding roughly 10% of China's internal market. But that compute is walled off: it doesn't participate in the global token economy and it runs on Huawei Ascend chips rather than NVIDIA hardware. China has effectively built a parallel token economy, smaller and less capable at the frontier, but sovereign. The question is whether that parallel system can close the gap, or whether export controls will keep it permanently behind.

Meanwhile, the EU and UK seem to be taking a back seat in the tokenenomy. Sure, the EU Chips Act looks impressive on paper, but paper doesn’t produce inference, and as we’ve already laid out, the bottlenecks take years to clear. If Europe and the UK want to join the party, they appear to be aiming to arrive very late. Right now, the volume of tokens actually being minted in this region seems to be little more than a rounding error.

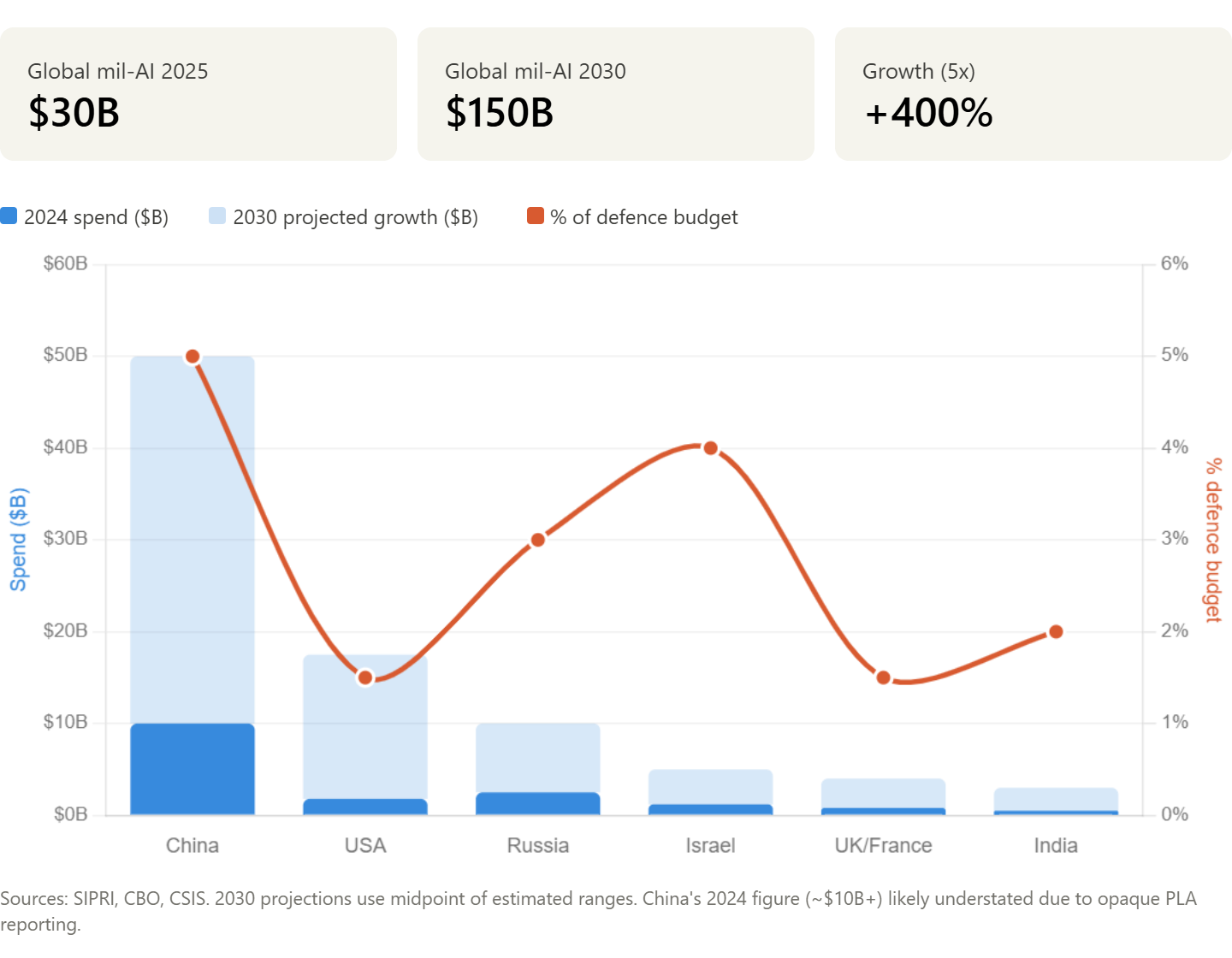

Defense Dollars Fuel AI Ascendancy

Nations aren't just hoarding chips and clusters; they're pouring billions into AI for the battlefield. The U.S. Department of Defense alone budgeted $1.8 billion for AI initiatives in FY2025, with projections hitting $2.5 billion by 2027 as programs like JAIC and Replicator scale drone swarms and predictive warfare. China counters with an estimated $10 billion+ annual military AI push, embedding neural nets in hypersonics and surveillance. Europe trails but is picking up speed: the UK's £1 billion AI defense fund and France's SCORPION program signal a transatlantic wake-up. This isn't R&D fluff. It's compute captured for kill chains: tokens trained on classified data to outthink adversaries. As militaries bid up GPUs and datasets, civilian innovation starves, turning the token war hot.

Who controls the most advanced models controls the outcome.

This chart should be a wake-up call to anyone still under the illusion that China isn’t keeping pace with the Western hyperscalers. Sure, their domestic token economy looks small on paper — but that fits right in with everything else China does: suppressing the flow of information to its own people. We’ve seen it with how they’ve restricted internet access behind the Great Firewall. We’ve seen it with how they manage their television and broadcast networks. AI access for ordinary citizens will be no different — rationed, filtered, and controlled.

But military use? That’s a different story entirely. China has no qualms about pouring compute into defence. While the domestic token economy stays deliberately small and walled off, the PLA’s AI budget tells you exactly where the real investment is going. The public-facing numbers are modest by design. The military numbers are anything but.

And the growth figures hammer the point home. The US goes from $1.8B to roughly $17.5B, a near-tenfold increase, and that sounds dramatic until you look east. China goes from $10B to $50B: a smaller multiple, but on a starting base more than five times the size and ending at nearly three times the US total. And that $10B starting figure? Almost certainly understated. PLA reporting is about as transparent as a brick wall. The real number could be significantly higher, and we’d never know until the hardware showed up on a battlefield or a satellite image.

Then there’s Russia. A trajectory from $2.5B to $10B doesn’t grab headlines the way China’s numbers do, but here’s what makes it dangerous: every rouble of that spending is being shaped by real-world battlefield feedback from Ukraine. While the US runs simulations and China war-games Taiwan scenarios, Russia is field-testing AI-driven drones, autonomous ground systems, and attrition warfare tactics against a live, adaptive enemy. That makes their military AI programme arguably the most battle-hardened on the planet. They’re not theorising about what works. They already know.
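Multiples and absolute dollars tell different stories, so it helps to put the three trajectories quoted above side by side. The figures are the ones given in the text, in billions of US dollars; nothing else is assumed.

```python
# (start, end) annual military AI spend in billions of USD, as quoted in the text.
budgets = {
    "US": (1.8, 17.5),
    "China": (10.0, 50.0),
    "Russia": (2.5, 10.0),
}

def growth(start, end):
    """Return (multiple, absolute increase) for one trajectory."""
    return end / start, end - start

for country, (start, end) in budgets.items():
    multiple, added = growth(start, end)
    print(f"{country:7s} x{multiple:4.1f}  +${added:.1f}B")
# US      x 9.7  +$15.7B
# China   x 5.0  +$40.0B
# Russia  x 4.0  +$7.5B
```

The US shows the steepest multiple, while China adds the most dollars by a wide margin.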

As of March 2026, numerous military AI projects have crossed the line from PowerPoint to deployment. The US, Israel, China, and India are all fielding live systems — surveillance platforms, target identification networks, autonomous "loyal wingman" drones, and AI-driven decision support tools that are actively shaping operational outcomes. Recent conflicts have only accelerated the timeline. US strikes in Iran and the 2025 India-Pakistan war didn't just use conventional weapons backed by AI — they proved the concept under fire and gave every defence ministry on Earth the justification to double down.

So, what goodies do they have in store for the world?

US Military Projects

Project Maven (2017–present): Started as drone imagery analysis using machine learning for object detection, then evolved into the Maven Smart System (with Palantir), integrating data from satellites, drones, and sensors for real-time targeting. Designated a "program of record" in March 2026 for all military branches; used in thousands of Iran strikes and operations in Iraq, Syria, Ukraine, Yemen. Incorporates Anthropic's Claude for simulations and compliance.

Collaborative Combat Aircraft (CCA) / Loyal Wingman: Perhaps nothing illustrates where military AI is heading better than the US Air Force's Collaborative Combat Aircraft programme — what most people know as the "loyal wingman."

The concept is deceptively simple: instead of sending a $100 million manned fighter into contested airspace alone, you pair it with a fleet of AI-controlled drones that fly alongside it. They scout ahead, jam enemy radar, carry extra weapons, absorb the hits the pilot can't afford to take, and if one gets shot down, you've lost a $25 million airframe instead of a pilot and a fifth-generation jet. The pilot stops being a lone warrior and becomes a battle manager, commanding a swarm of autonomous wingmen from the cockpit.

DARPA Artificial Intelligence Reinforcements (AIR): If the CCA programme is about giving pilots a pack of AI-controlled wingmen, DARPA’s AIR programme — Artificial Intelligence Reinforcements — is about making those wingmen think for themselves when nobody’s around to hold their hand.

AIR is the direct successor to the ACE programme that produced the AlphaDogfight Trials. Where ACE proved an AI could win a close-range dogfight, AIR is tackling the harder problem: beyond-visual-range combat. That’s the real world of modern air warfare: engagements happening at distances where you can’t see the enemy, where decisions are made on sensor data, electronic warfare signatures, and probabilistic models of what the other side might do next. No Top Gun theatrics. Just algorithms deciding who fires first and whether the shot will connect.

Israel Military Projects

Lavender: Gaza War (2023+): Israel’s Lavender system showed the world what happens when you point AI at a population and tell it to find enemies.

Lavender is an AI-powered targeting database developed by Unit 8200, Israel’s signals intelligence division. It was deployed during the Gaza war from October 2023 onward, and its job was simple: sift through surveillance data on virtually every person in Gaza — phone records, social media activity, movement patterns, social network connections — and assign each one a probability score of being affiliated with Hamas or Palestinian Islamic Jihad. At its peak, the system generated a kill list of 37,000 people.

Habsora / Gospel: Lavender identified the people. Gospel identified where to send the bombs.

Gospel — known internally as Habsora — is the other half of Israel’s AI targeting apparatus. Where Lavender built kill lists of individuals, Gospel processes surveillance imagery, intercepted communications, and sensor data to flag buildings, infrastructure, and locations as military targets. Command posts, weapons stores, rocket launchers, private homes of suspected operatives — Gospel generates the recommendations, a human analyst rubber-stamps them, and the coordinates go to the air force.

The speed is the point. Before Gospel, Israeli intelligence officers could produce roughly 50 vetted targets in a 300-day cycle. Gospel generates 100 a day. By early November 2023, the IDF reported that Gospel’s target administration division had identified over 12,000 targets in Gaza. By mid-December, the military said it had struck more than 22,000 — a daily rate more than double that of the 2021 conflict.

That pace isn’t a byproduct. It’s the design philosophy. Gospel exists to ensure the air force never runs out of things to bomb. And in Gaza, it didn’t disappoint — an effectively inexhaustible supply of targets in one of the most densely populated places on Earth.

China Military Projects

AI Military Commander (2024): China, meanwhile, isn’t just building AI weapons. They’re building an AI general.

In May 2024, researchers at the PLA’s Joint Operations College revealed they’d created what they call a “virtual commander” — an AI system designed not to fly a drone or pick a target, but to command entire wars. Caged inside a laboratory in Shijiazhuang, this AI has been granted supreme command authority in large-scale PLA war games spanning all military branches: army, navy, air force, the lot. It identifies threats, devises strategy, adjusts plans on the fly, and makes operational decisions — all without a human in the loop.

What makes it genuinely unsettling is the design philosophy. The AI doesn’t just crunch data. It’s been built to think like a specific human commander, complete with personality traits and deliberate flaws. It can wear different avatars: one mode emulates General Peng Dehuai — aggressive, risk-taking, the man who blindsided US forces with lightning strikes during the Korean War. Another channels General Lin Biao — cautious, meticulous, never committing until the odds are overwhelmingly stacked. The researchers even capped its memory to simulate human forgetfulness, forcing it to discard old knowledge and work with incomplete information, just like a real officer under pressure.

And the purpose is barely disguised. The PLA has operational plans for Taiwan and the South China Sea. They don’t have enough senior commanders available to war-game every scenario at scale. The AI solves that problem — running simulations endlessly, learning from each defeat, refining its approach, and never needing to sleep. By 2025, a separate team at Xi’an Technological University had plugged DeepSeek’s large language model into the same concept, generating 10,000 battlefield scenarios in 48 seconds — a task that would take a human commander 48 hours.

That represents just one confirmed project from China. Given their military spending in AI and track record of parallel development initiatives, this is likely one piece of a much larger strategic puzzle they’re solving.

Russian Military Projects

Marker UGV: The Marker is a three-tonne unmanned ground vehicle built by Androidnaya Tekhnika, a robotics outfit under the umbrella of Russia's Advanced Research Foundation. Development started in 2018, and by February 2023, four units were deployed to the Donbas. It's a modular platform — swap out the payload module and it goes from reconnaissance to anti-tank to drone carrier. Mount Kornet missiles and it kills armour. Bolt on a UAV cluster launcher and it can deploy up to 100 kamikaze drones from a single chassis. Its neural network-driven vision system identifies targets autonomously — people, vehicles, drones — and its turret can rotate 540 degrees in a second.

It sounds pretty impressive on paper but in reality it hasn’t proven as formidable as you’d think. In March 2024, a platoon of Russian Courier UGVs armed with grenade launchers attempted a coordinated assault near Berdychi — the first time ground robots had been used for a direct frontal attack in the war. Ukrainian FPV drones wiped them all out on camera.

But Russia keeps iterating. That's the thing about having a live laboratory. By late 2024, Rostec — the state defence conglomerate — was integrating its Prometey autonomy system across multiple platforms, converting existing vehicles like the BMP-3 into semi-autonomous systems and testing a growing zoo of purpose-built UGVs for everything from mine-laying to casualty evacuation to thermobaric strikes. The feedback loop is measured in weeks, not years. Something fails on a Tuesday, the design is updated by Friday, and a modified version is back at the front by the following month.

Lancet Loitering Munition: Built by ZALA Aero — a subsidiary of Kalashnikov — the Lancet is a loitering munition. Basically a kamikaze drone. It weighs 12 kilograms, carries a shaped-charge or fragmentation warhead, loiters over the battlefield for up to an hour at 110 km/h, and when it finds what it’s looking for, it dives and detonates on impact. No recovery. No reuse. One drone, one target, a big bloody mess!

The numbers from Ukraine are staggering. By February 2025, ZALA officially confirmed over 3,000 successful strikes, among them 353 tanks, 111 air defence systems, 60 multiple launch rocket systems, and hundreds of Western-supplied howitzers such as M777s, CAESARs, and Krabs. Independent tracking puts the total beyond 3,600 documented hits, with the real number almost certainly higher. In May 2024 alone, Russian forces recorded 303 Lancet strikes. The claimed hit rate against armoured targets sits at roughly 78%.

What makes the Lancet genuinely dangerous isn’t the explosive. It’s the brain. The latest variants are equipped with AI-powered optical recognition that can identify and lock onto camouflaged targets autonomously.

Poseidon (Status-6): And then there’s the weapon that makes everything else on this list look like a high-school science fair project.

Poseidon — originally leaked under the codename Status-6, known to NATO as Kanyon — is a nuclear-powered, nuclear-armed autonomous underwater drone. Not a torpedo in any conventional sense. It’s 65 feet long, weighs 100 tonnes, carries its own compact nuclear reactor, and has a reported range of 10,000 kilometres. It doesn’t need to surface. It doesn’t need refuelling. It can sit dormant on the ocean floor for months, then activate, navigate autonomously to a coastal city on the other side of the planet, and detonate a warhead estimated at anywhere between 2 and 100 megatons.

For context, the bomb that destroyed Hiroshima was 15 kilotons. The upper estimates for Poseidon’s warhead are roughly 6,700 times more powerful. Some Russian sources have described it as a cobalt-salted device, designed not just to destroy but to render vast areas radioactively uninhabitable for decades. Russian state television has claimed it could generate a 500-metre radioactive tidal wave capable of drowning entire coastlines. Independent physicists have pushed back on the tsunami claims (an underwater explosion disperses energy in all directions, not in the focused surge of a natural tsunami), but the raw destructive potential of the warhead itself is not in dispute.
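The comparison is a straight unit conversion, sketched here so the range is visible at both ends. The 15-kiloton figure and the 2 to 100 megaton estimate are the ones quoted in the text.

```python
HIROSHIMA_KT = 15  # kilotons, as quoted in the text

def times_hiroshima(yield_megatons):
    """Ratio of a yield in megatons to the 15-kiloton Hiroshima figure."""
    return yield_megatons * 1_000 / HIROSHIMA_KT  # 1 megaton = 1,000 kilotons

print(round(times_hiroshima(2)))    # 133
print(round(times_hiroshima(100)))  # 6667
```

Even the low-end estimate works out to more than a hundred Hiroshimas.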

Russia has many more projects in development and in the field—all impressive, but Poseidon is by far the biggest threat to its enemies. It is death incarnate.

UK Military Projects

DragonFire: The UK’s contribution to the military AI race is quieter than most, but it solves a problem that every navy on Earth is now facing: how do you stop a swarm of cheap drones without bankrupting yourself firing million-pound missiles at them?

DragonFire is a high-energy laser weapon — a 50-kilowatt beam that travels at the speed of light, costs £10 per shot, and can hit a target the size of a pound coin from a kilometre away. Built by a consortium of MBDA, Leonardo, and QinetiQ, it’s the first directed-energy weapon being developed for front-line service by a European nation.

The AI sits in the fire control. DragonFire’s targeting system uses advanced algorithms to detect, track, and hold the beam on a fast-moving target with sub-millimetric precision — all while compensating for atmospheric disturbance, platform motion from the ship, and the target’s own evasive manoeuvres. In trials at Porton Down in early 2025, the system tracked and engaged large numbers of small UAVs, including drones conducting high-speed manoeuvring. By October 2025, at the MoD’s Hebrides range in Scotland, DragonFire shot down drones flying at over 650 km/h — a UK first for above-the-horizon laser engagement. The system detected, tracked, locked on, and burned through the target structure until the drone fell out of the sky.

SG-1 Fathom: It started as a whale tracker. Now it hunts submarines.

The SG-1 Fathom is a two-metre, 60-kilogram underwater glider built by Helsing — a European defence AI company — in partnership with Blue Ocean, QinetiQ, and Ocean Infinity. It has no propeller. No engine noise. It moves by shifting its buoyancy, using its wings to convert vertical sinking and rising into a slow, silent forward glide at one to two knots. It can stay submerged for up to three months, patrol at depths of up to 1,000 metres, or sit motionless on the seabed as a listening node. It is, for all practical purposes, invisible to passive sonar.

The brain is what makes it dangerous. Each glider carries Lura — Helsing’s AI acoustic platform, built on what the company calls a “large acoustic model.” Think of it like a large language model, but instead of predicting the next word in a sentence, it’s predicting what made a particular sound in the ocean.

Perpetual Iteration

When we consider the real threat of Artificial Intelligence, we focus on the Intelligence. But while intelligence is accelerating humanity's technological progress at speeds never seen before, it would be far less effective without one very significant ingredient: iteration.

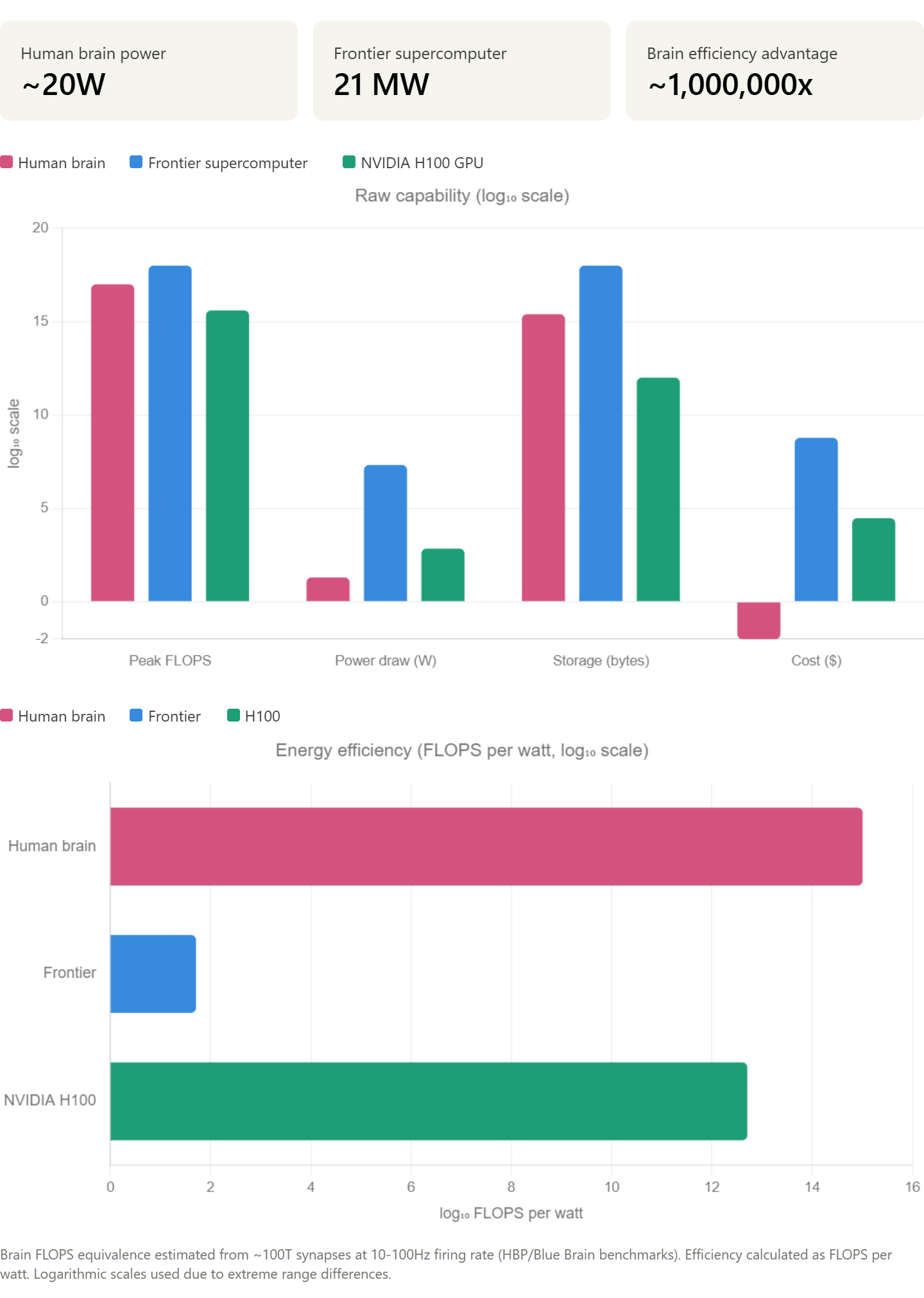

Humans learn by iteration from the moment our first few synapses form in the brain. We try, we fail, we iterate until the desired outcome is reached. We are good at it, but we are limited by the processing power of our brain.

The human brain is a marvel, and so far humanity has yet to create anything that can compete one-on-one with its own mental capacity. But where humans are not great fans of being bred by the millions in manufacturing plants and integrated into data centres to perform one task and iterate it 24/7 — an NVIDIA H100 GPU puts up less of a fight, asking only for some power and a little air con.

The brain is more efficient. The brain is more elegant. But the brain can’t do the one thing that makes AI dangerous: perpetual iteration.

A human learns through experience — slowly, linearly, one lifetime at a time. An AI model learns through iteration: rapid, relentless cycles of train, test, refine, repeat. Each cycle makes the model marginally better. Then the better model generates better training data, builds better tools, and identifies better shortcuts — which feeds the next cycle. The improvement compounds. What starts as a straight line bends into a curve, and the curve goes parabolic.

This is the dynamic that AI researcher Rich Sutton called the “Bitter Lesson” — the uncomfortable truth that brute-force scaling of compute and data almost always beats clever hand-engineered approaches. It doesn’t matter how elegant your algorithm is. Given enough iterations, raw compute wins. Every time.

The numbers back it up. In 2020, researchers at OpenAI published scaling laws showing that model loss falls as a smooth power law in compute: every doubling of compute buys a small but predictable improvement, across many orders of magnitude. That relationship held from GPT-2 to GPT-4 to o1, each generation not just incrementally better but qualitatively different in capability. GPT-2 could string sentences together. GPT-4 passed the bar exam. That’s not linear progress. That’s what compounding iteration looks like when you pour exponential compute into it.
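Here is a rough sketch of how a power-law scaling relationship behaves, assuming the form loss ∝ compute^(−α). The exponent `ALPHA = 0.05` is an assumed illustrative value, in the region reported for compute in the 2020 work but not a figure to rely on, and "loss" here is training loss, not a direct measure of real-world capability.

```python
ALPHA = 0.05  # assumed power-law exponent, for illustration only

def loss_ratio(compute_multiple, alpha=ALPHA):
    """Factor by which loss changes when compute is multiplied."""
    return compute_multiple ** -alpha

def compute_multiple_for(target_loss_ratio, alpha=ALPHA):
    """Compute multiple needed to scale loss by target_loss_ratio."""
    return target_loss_ratio ** (-1 / alpha)

print(f"2x compute  -> loss x{loss_ratio(2):.3f}")                 # x0.966
print(f"halve loss  -> compute x{compute_multiple_for(0.5):,.0f}")  # x1,048,576
```

Under this assumption each doubling of compute trims loss by only about 3%, and halving it takes roughly a million times more compute. That is why the race is about who can afford to keep scaling.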

And the feedback loops are getting tighter. Early models improved through human-labelled training data — expensive, slow, limited by the number of people you could hire to sit in a room and rate outputs. Then came RLHF — reinforcement learning from human feedback — which let models like ChatGPT refine themselves based on what users actually found useful. Now we’re entering the era of agentic self-improvement: models like o1 that can debug their own reasoning, test their own outputs, and iterate on their own performance without waiting for a human to tell them what went wrong. The human is being removed from the loop, and the loop is speeding up.

The trajectory is a hockey stick.

Before 2010, AI compute was growing slowly — roughly in line with Moore’s Law. Then the Transformer architecture arrived in 2017, and the curve broke upward. By 2026, Epoch AI estimates that frontier model training runs are hitting 10²⁵ FLOPs — a billion times more compute than was used in 2010. That’s not sixteen years of steady progress. That’s sixteen years of compounding iteration, each cycle feeding the next, with the doubling period getting shorter every generation.
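The billion-fold figure implies a doubling period, which a few lines of arithmetic can recover. The sketch uses only the numbers in the paragraph above (a 10^9 increase over sixteen years) and compares them with a two-year doubling, which is the usual shorthand for Moore's Law and is an assumption here.

```python
import math

def doubling_time_months(growth_factor, years):
    """Average months per doubling for a given total growth over `years`."""
    doublings = math.log2(growth_factor)
    return years * 12 / doublings

def growth_from_doubling(years, months_per_doubling):
    """Total growth after `years` at a fixed doubling period."""
    return 2 ** (years * 12 / months_per_doubling)

print(f"{doubling_time_months(1e9, 16):.1f} months per doubling")  # 6.4
print(f"Moore's Law pace: x{growth_from_doubling(16, 24):.0f}")    # x256
```

A billion-fold rise in sixteen years means compute doubled about every six and a half months on average, against a mere 256-fold gain at the two-year pace.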

This is why the token wars matter. This is why nations are hoarding compute, why chip fabs are strategic assets, and why control of the GPU supply chain has become a geopolitical flashpoint. The race isn't just about who has the best model today. It's about who can iterate fastest — because in a system governed by compounding returns, a small lead in iteration speed becomes an insurmountable advantage within a handful of cycles. Whoever controls the compute controls the iteration rate. Whoever controls the iteration rate controls the curve. And the curve is heading somewhere none of us have been before.

With iteration, inevitably, comes AGI (Artificial General Intelligence), and pretty darn quickly, particularly when you consider how far models have come in just a few years.

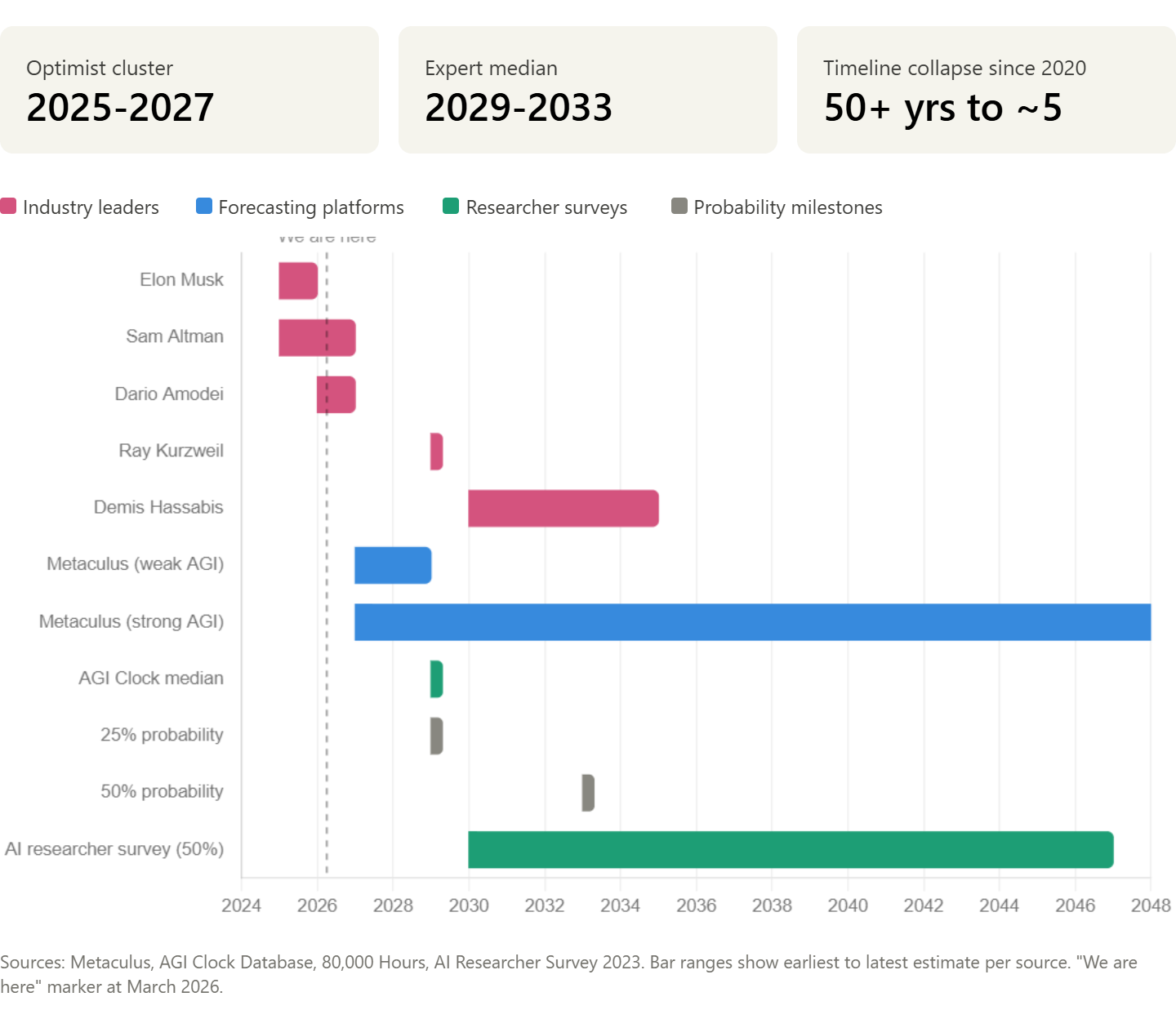

The story the chart tells is striking. The optimists — the people actually building these systems — are clustered in a tight band between now and 2029. The broader forecasting communities and researcher surveys stretch further out, but even their medians have collapsed dramatically. In 2020, Metaculus had AGI at 50+ years away. Now it’s sitting at around 2032. That’s not a gradual revision — that’s a timeline that fell off a cliff.

The “we are here” line sitting right at the leading edge of the optimist cluster is the uncomfortable bit. If Musk, Altman, or Amodei are even roughly right, we’re not looking at AGI as a future problem. We’re looking at it as a present one.

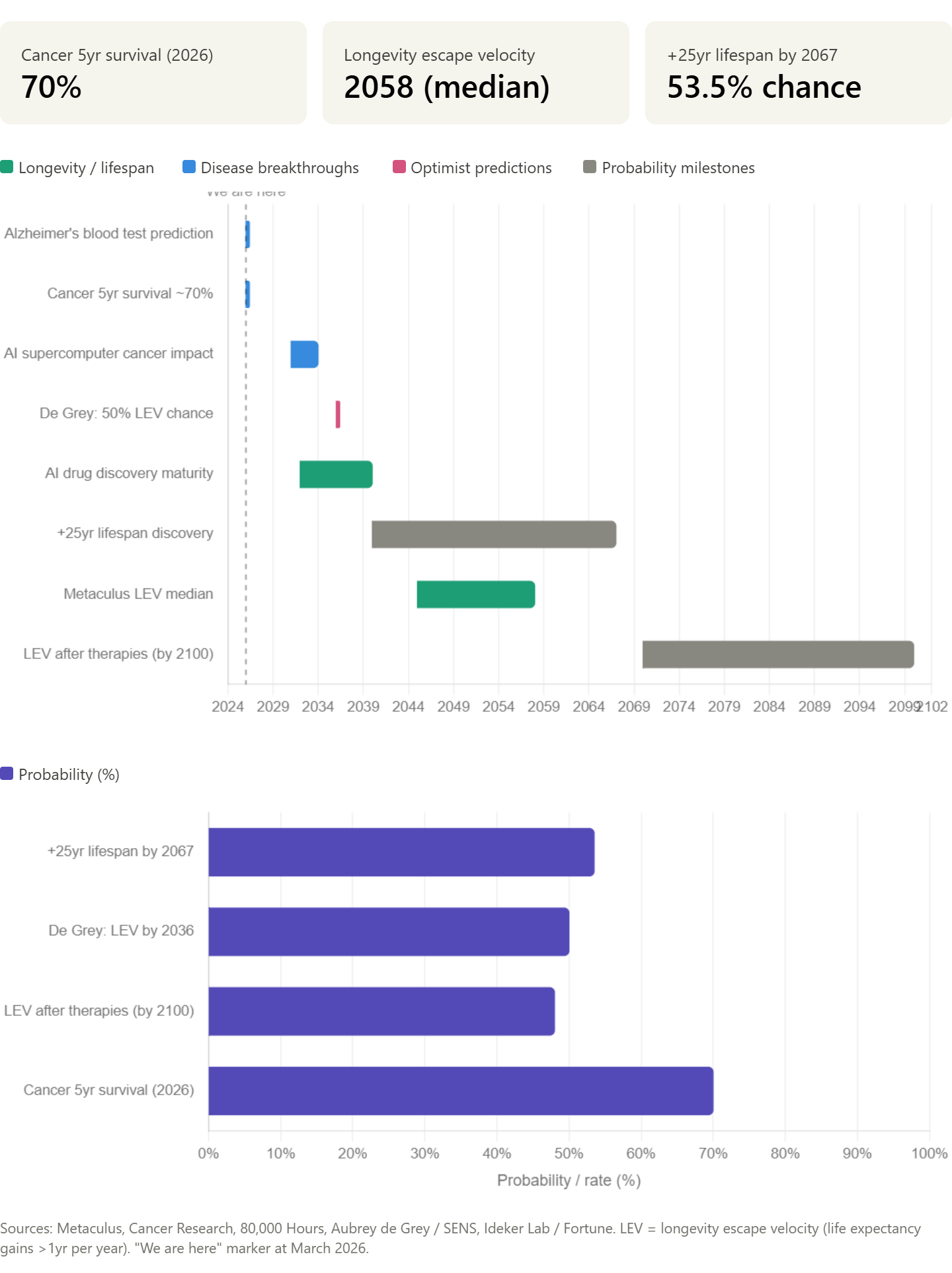

The top chart maps when each breakthrough is predicted to land, from what’s already happening now through to longevity escape velocity predictions stretching toward the end of the century. The bottom chart shows the probability estimates — and the striking thing is how tightly they cluster around 50%. The research community is essentially calling it a coin flip on whether we crack the longevity problem within most of our lifetimes.

The gap between “we are here” and the first longevity milestones is the AI drug discovery window — where the $1B US bet on AI supercomputers for cancer sits, and where protein folding and molecular modelling are expected to start producing clinical results rather than just papers. If the AGI timeline chart we made earlier is right, general intelligence arriving in the late 2020s to early 2030s could compress these longevity timelines dramatically.

Hardware Horizon: Robots in the Token Race

But here’s why this matters to the token wars: robots need tokens. Lots of them. Every autonomous robot—whether Tesla’s Optimus, Figure AI’s humanoid, or military drones—relies on continuous neural inference for perception, planning, and action. And the token hunger is real.

Current autonomous robots burn between 10 and 1,000 tokens per second of real-time operation, depending on the model and task. Vision dominates the token load (60-80% of usage), because every camera frame gets encoded into 100-1,000 tokens before the AI can reason about what it’s seeing. Planning loops add another 10-100 tokens per action decision. A single Tesla Optimus robot (running custom 7-billion-parameter models with video processing at 30fps) consumes roughly 50-200 tokens per second during active operation, which works out to roughly 4-17 million tokens per day if it runs around the clock. Figure AI’s Llama-based 70B models with vision land in a similar range, with a single humanoid burning 5-20 million tokens daily. Military drones running edge LLMs for swarm planning and targeting push even higher: 10-100 million tokens per day.
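Daily totals depend heavily on how many hours a robot is actually active, so it helps to make the conversion explicit. The sketch below turns a per-second token rate into a per-day figure; the `duty_cycle` parameter (the fraction of the day spent in active operation) is an assumption introduced for illustration.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def tokens_per_day(tokens_per_second: float, duty_cycle: float = 1.0) -> float:
    """Daily token consumption for a given per-second rate.

    duty_cycle is the assumed fraction of the day the robot is active
    (1.0 means running around the clock).
    """
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return tokens_per_second * SECONDS_PER_DAY * duty_cycle


if __name__ == "__main__":
    for rate in (50, 200):
        full = tokens_per_day(rate)
        shift = tokens_per_day(rate, duty_cycle=1 / 3)  # assumed 8-hour shift
        print(f"{rate} tok/s: {full / 1e6:.1f}M per day continuous, "
              f"{shift / 1e6:.1f}M per day on an 8-hour shift")
```

At 50-200 tokens per second, continuous operation comes to about 4.3-17.3 million tokens per day; an eight-hour shift cuts that to a third.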

Now multiply. Tesla targets one million units per year by late 2026. Boston Dynamics is tooling up for 30,000 Atlas units annually. Chinese manufacturers are flooding the market with competitors at $13,500 a unit. Even at conservative adoption rates, we’re talking about billions of autonomous robots deployed globally within a decade. If each one demands 10-100 million tokens daily, the cumulative inference load becomes staggering: every million robots adds 10-100 trillion tokens per day, and a fleet of a billion would demand tens of quadrillions. That’s on top of the hundreds of trillions already demanded by ChatGPT users, Google searches, and enterprise AI systems.
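The fleet-level arithmetic is easy to check. The sketch below multiplies a fleet size by a per-robot daily token budget; the fleet sizes are illustrative scenarios, not forecasts, and the per-robot range is the 10-100 million figure used in the paragraph above.

```python
def fleet_tokens_per_day(units: int, tokens_per_unit_per_day: int) -> int:
    """Total daily token demand for a fleet of identical robots."""
    return units * tokens_per_unit_per_day


PER_ROBOT_LOW = 10_000_000    # 10 million tokens per robot per day
PER_ROBOT_HIGH = 100_000_000  # 100 million tokens per robot per day

if __name__ == "__main__":
    # Illustrative fleet sizes: one million, one hundred million, one billion.
    for units in (1_000_000, 100_000_000, 1_000_000_000):
        low = fleet_tokens_per_day(units, PER_ROBOT_LOW)
        high = fleet_tokens_per_day(units, PER_ROBOT_HIGH)
        print(f"{units:>13,} robots: {low:.0e} to {high:.0e} tokens per day")
```

One million robots lands at 10 to 100 trillion tokens per day; a billion robots lands at 10 to 100 quadrillion.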

The bottleneck isn’t just raw token capacity—it’s edge compute. Most commercial robots run lean: 10-100 watts onboard, forcing reliance on distilled models like Phi-3 (3.8B parameters) that can squeeze out only 50 tokens per second. That’s fast enough for factory floors, but inadequate for complex, unpredictable environments. Humanoid robots destined for homes can’t afford cloud latency; they need real-time reasoning. This forces a tragic trade-off: either robots stay dumb and repetitive (good for factories, useless for homes), or they eat enormous token budgets chasing cloud inference, bottlenecking against the same token scarcity everyone else is fighting over.

The result: whoever controls token supply controls robot capability. A robot deprived of tokens is just an expensive paperweight with servos. One drowning in tokens is approaching AGI in physical form. This isn’t metaphorical—military planners know it. China’s PLA isn’t just building AI generals; they’re securing token pipelines to power autonomous swarms. The US defense budget reflects the same priority.

Whoever secures compute secures robot dominance. Whoever secures robot dominance secures economic and military supremacy.

The Token War is Coming—it is inevitable

Whether we like it or not, AI is here. It’s not going to iterate itself out of existence — well, not before humanity at least. The rolling headlines of “The AI Bubble is About to Burst!” and “Don’t Fall for the AI Hype” — yada yada — are not wrong, but they are deceptive. Yes, as with every major disruptive technology, bubbles form as over-enthusiasm and opportunists trip over themselves to capitalise on the moment. We saw it with the internet. We saw it with Bitcoin. We’re seeing it right now with AI. But overleveraged opportunists failing miserably at producing something of value doesn’t mean the underlying technology is overhyped. The dot-com bubble burst in 2000 and killed a thousand garbage startups — but it didn’t kill the internet. Amazon survived. Google survived. The infrastructure that was laid during the mania went on to reshape civilisation. The same pattern is playing out now, except the technology underneath is orders of magnitude more transformative than anything that came before it.

I’d argue AI is underhyped. Not in the investment sense — there’s plenty of froth there — but in the public consciousness sense. Most people are still thinking about AI as a chatbot that helps them write emails or a novelty that generates weird pictures. They’re not thinking about autonomous weapons systems selecting targets in Gaza, or underwater drones hunting submarines for three months without surfacing, or humanoid robots replacing factory workers at $20,000 a unit, or nation states hoarding compute infrastructure like Cold War uranium.

The gap between what the public thinks AI is and what AI is actually doing right now — in military, in infrastructure, in geopolitics — is vast. And it’s widening every month.

By 2030, the world we live in will be unrecognisable. Every aspect of our lives — how we work, how we fight, how we heal, how we communicate, how we are governed — will be radically different to everything we knew just a decade before. The token economy will underpin all of it. Whoever controls the compute, controls the tokens. Whoever controls the tokens, controls the iteration rate. And whoever controls the iteration rate controls the future.

I’d like to say it will be for the better. And in many ways it will: AI-driven medicine, abundant clean energy, the automation of drudgery, the democratisation of knowledge, provided the human tyrants allow it. But I’m not ignorant of the treacherous path that lies ahead for humanity to navigate safely. The same technology that cures cancer can select kill lists. The same infrastructure that powers your chatbot powers autonomous weapons. The same race for compute that drives innovation drives geopolitical confrontation.

The line between utopia and catastrophe has never been thinner, and we’re walking it at speed, in the dark, with no consensus on where we’re heading.

The token war isn't coming. It's already here. By the time most people realise what's happening, the key battles will already be decided: in labs, in fabs, in the dark server rooms where iteration happens at machine speed.

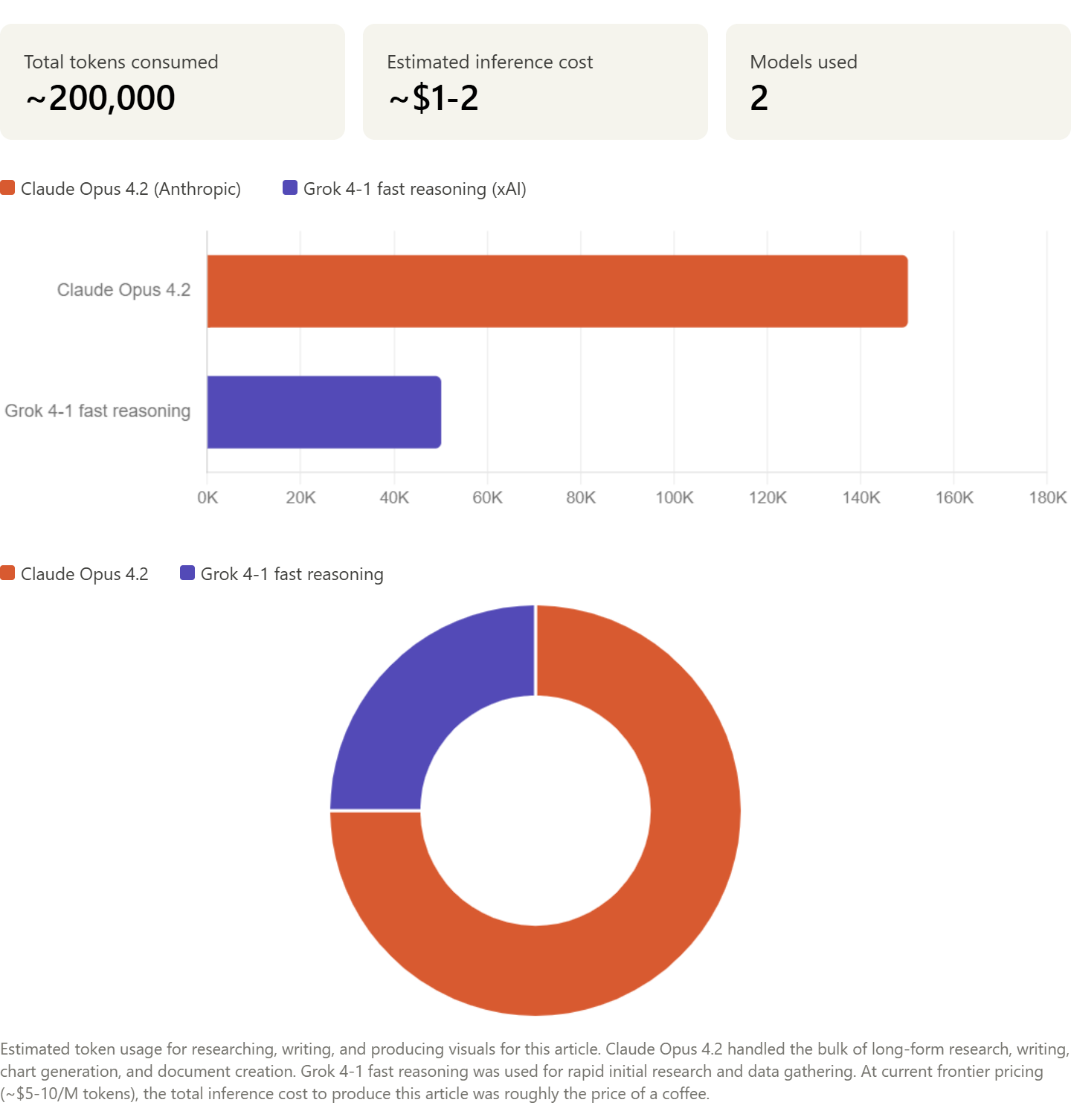

Article Inference report:

Roughly 200,000 tokens across two models to research, develop the article framework, expand on topic points, proofread, and generate charts.

Claude Opus 4.2 did the heavy lifting at ~150K tokens (75% of total), with Grok handling the rapid initial research at ~50K.

At current frontier pricing, the entire article cost less than a cup of coffee in inference compute.
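For anyone who wants to run the same sum for their own project, here is a minimal sketch of the cost calculation. The per-million-token prices are hypothetical placeholders, not quoted rates from any provider; substitute the real input and output prices for the models you use.

```python
def inference_cost_usd(usage: dict[str, int], price_per_million: dict[str, float]) -> float:
    """Total cost in USD for token usage per model at a flat price per million tokens."""
    return sum(tokens / 1_000_000 * price_per_million[model]
               for model, tokens in usage.items())


# Token counts from the report above.
USAGE = {"claude": 150_000, "grok": 50_000}

# HYPOTHETICAL placeholder prices in USD per million tokens, for illustration only.
PLACEHOLDER_PRICES = {"claude": 20.0, "grok": 20.0}

if __name__ == "__main__":
    total = inference_cost_usd(USAGE, PLACEHOLDER_PRICES)
    print(f"estimated cost at placeholder prices: ${total:.2f}")
```

Real pricing usually distinguishes input from output tokens, so a flat rate like this gives only a rough upper or lower bound depending on the mix.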